How Crawl Budget Impacts SEO: Myths and Best Practices

Introduction to Crawl Budget

Search engine optimization (SEO) is a multi-faceted discipline. One of its more technical yet crucial aspects is crawl budget optimization. Although often overlooked, crawl budget can play a significant role in how well your site performs in organic search, particularly on large sites.

In this article, we’ll explore what crawl budget really is and how it works, debunk some of the most common myths around it, and provide practical strategies to make sure Googlebot spends its crawl requests on the pages that matter most.

How Googlebot Crawls the Web

Google uses a crawler called Googlebot to discover and fetch content from across the internet so that it can be indexed. It uses a variety of signals to decide which pages to crawl, when, and how often. These signals include:

- Internal linking structure

- Sitemap files

- Backlinks from other websites

- Site popularity and freshness

- Server performance

When Googlebot visits a website, it requests pages, parses them, follows links, and queues discovered URLs for later crawling. This entire process is subject to crawl constraints—namely the crawl rate limit and crawl demand.
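The request, parse, follow, and queue loop described above can be sketched as a breadth-first crawl with a fetch cap. This is a toy illustration and not Googlebot's actual scheduler: the `SITE_LINKS` graph, the `crawl` function, and the `max_fetches` cap are all hypothetical stand-ins for a real site and its crawl constraints.

```python
from collections import deque

# Hypothetical in-memory "site": each URL maps to the links found on that page.
SITE_LINKS = {
    "/": ["/products", "/blog"],
    "/products": ["/products/a", "/products/b", "/"],
    "/blog": ["/blog/post-1", "/products/a"],
    "/products/a": [],
    "/products/b": [],
    "/blog/post-1": ["/"],
}

def crawl(start, links, max_fetches):
    """Breadth-first crawl that stops after max_fetches page requests."""
    frontier = deque([start])  # URLs queued for later crawling
    seen = {start}             # every URL discovered so far
    fetched = []               # URLs actually requested, in order
    while frontier and len(fetched) < max_fetches:
        url = frontier.popleft()
        fetched.append(url)                # "request" and "parse" the page
        for link in links.get(url, []):    # follow the links found on it
            if link not in seen:
                seen.add(link)
                frontier.append(link)      # queue newly discovered URLs
    return fetched, list(frontier)

fetched, still_queued = crawl("/", SITE_LINKS, max_fetches=4)
print("fetched:", fetched)
print("still queued:", still_queued)
```

With a cap of four fetches, two discovered URLs remain in the queue. That is the basic effect of a crawl constraint: pages can be known to the crawler without having been fetched yet.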

What Is Crawl Budget, Exactly?

Google defines crawl budget as the combination of:

- Crawl Rate Limit: The maximum number of simultaneous connections Googlebot will open to your site, and the delay between fetches, so that crawling does not degrade server performance.

- Crawl Demand: How much Google wants to crawl your site, based mainly on how popular your URLs are and how stale Google’s stored copies of them have become.

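Taken together, crawl budget is the set of URLs that Googlebot can crawl (rate limit) and wants to crawl (demand). The two are not literally added together: if demand is low, Googlebot crawls less even when your server could handle more.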

If your site has 100,000 URLs, but only 1,000 are crawled daily, you need to ensure that those 1,000 crawled URLs are the most valuable ones.
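To make the arithmetic in that example concrete, here is a small sketch. It assumes a constant daily crawl volume and that no URL is fetched twice, which real crawling does not guarantee; the function name `crawl_coverage` is made up for illustration.

```python
import math

def crawl_coverage(total_urls, crawled_per_day):
    """Return (share of the site crawled per day, days for one full pass)."""
    daily_share = crawled_per_day / total_urls
    days_for_full_pass = math.ceil(total_urls / crawled_per_day)
    return daily_share, days_for_full_pass

share, days = crawl_coverage(100_000, 1_000)
print(f"{share:.0%} of URLs per day, {days} days for one full pass")
# prints: 1% of URLs per day, 100 days for one full pass
```

Even under these generous assumptions, a change to a given page could take over three months to be picked up, which is why it matters which 1,000 URLs get crawled.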

Factors That Influence Crawl Budget

A wide range of factors can impact how much of your site is crawled:

A. Site Size

Large websites (e.g., e-commerce sites with thousands of product pages) need to pay close attention to crawl budget.

B. Site Speed and Server Performance

Slow responses reduce how much Googlebot crawls. If a server is frequently overloaded or returns errors, Googlebot backs off and lowers its crawl rate.

C. Duplicate Content

Too many similar or duplicate pages can dilute your crawl budget. Google might waste time on low-value pages.
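One common source of duplicates is the same page reachable under several URL variants. The sketch below groups URLs that collapse to the same normalized form. The `IGNORED_PARAMS` set and the normalization rules (lowercase host, no trailing slash, sorted parameters) are assumptions for illustration; which parameters are safe to ignore depends on your site.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed list of parameters that do not change page content on this site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}

def normalize(url):
    """Collapse a URL to a comparable form for duplicate detection."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in IGNORED_PARAMS
    )
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), path, urlencode(query), "")
    )

def group_duplicates(urls):
    """Return {normalized_url: [variants]} for URLs with more than one variant."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}

crawled = [
    "https://example.com/shoes?utm_source=news",
    "https://Example.com/shoes/",
    "https://example.com/shoes?color=red&size=9",
    "https://example.com/shoes?size=9&color=red",
    "https://example.com/hats",
]
for canonical, variants in group_duplicates(crawled).items():
    print(canonical, "<-", variants)
```

Each group is a candidate for a canonical tag or a redirect to the single preferred URL.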

D. Broken Links and Errors (4xx and 5xx Status Codes)

Every request that ends in an error is a wasted fetch, and persistent 5xx server errors in particular signal Googlebot to slow down its crawling.

E. Redirect Chains

Every hop in a redirect chain costs an extra request, and crawlers give up on chains that are too long or that loop back on themselves.
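A minimal sketch of how redirect chains can be measured, assuming you have already exported a source-to-target redirect map from a crawl. The `REDIRECTS` data, the `resolve` function, and the `max_hops=10` default are illustrative choices, not a statement of any crawler's exact limit.

```python
# Hypothetical redirect map gathered from a site crawl: source -> target.
REDIRECTS = {
    "/old-page": "/interim-page",
    "/interim-page": "/newer-page",
    "/newer-page": "/final-page",
    "/loop-a": "/loop-b",
    "/loop-b": "/loop-a",
}

def resolve(url, redirects, max_hops=10):
    """Follow redirects from url.

    Returns (last_url, hops, resolved). resolved is False when the chain
    loops or exceeds max_hops.
    """
    hops = 0
    seen = {url}
    while url in redirects:
        if hops == max_hops:
            return url, hops, False   # chain too long, give up
        url = redirects[url]
        hops += 1
        if url in seen:
            return url, hops, False   # redirect loop
        seen.add(url)
    return url, hops, True

for source in REDIRECTS:
    final, hops, resolved = resolve(source, REDIRECTS)
    if not resolved:
        print(f"{source}: broken chain after {hops} hops")
    elif hops > 1:
        print(f"{source}: {hops} hops, point it directly at {final}")
```

Any source reported with more than one hop can usually be updated to redirect straight to its final destination.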

F. XML Sitemaps and Robots.txt

These help guide crawlers to important pages and away from unimportant ones.

G. Orphan Pages

Pages with no internal links are hard to find and often ignored in crawling.
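Orphan pages can be found by comparing the URLs you want indexed (for example, those in your sitemap) against the URLs that internal links actually point to. The sketch below assumes both lists are already available; `find_orphans`, `entry_points`, and the sample data are hypothetical.

```python
def find_orphans(sitemap_urls, link_graph, entry_points=("/",)):
    """Return sitemap URLs that no other page links to.

    link_graph maps each page to the list of internal URLs it links to.
    entry_points are excluded because they are reached directly.
    """
    linked = set()
    for source, targets in link_graph.items():
        # Ignore self-links: a page linking to itself is still orphaned.
        linked.update(target for target in targets if target != source)
    return sorted(set(sitemap_urls) - linked - set(entry_points))

sitemap_urls = ["/", "/products", "/products/a", "/landing/spring-sale"]
link_graph = {
    "/": ["/products"],
    "/products": ["/products/a", "/"],
    "/landing/spring-sale": ["/products"],
}
print(find_orphans(sitemap_urls, link_graph))
# prints: ['/landing/spring-sale']
```

Note that the orphan in this example links out to other pages, but nothing links in to it, so a crawler following links would never reach it.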

Common Myths About Crawl Budget

There are many misconceptions about crawl budget. Let’s debunk a few:

Myth 1: Crawl Budget Affects Small Websites

If your site has fewer than a few thousand pages, you likely don’t need to worry about crawl budget.

Myth 2: Crawl Budget Directly Impacts Rankings

Crawl budget affects indexing, not rankings. But if important pages aren’t crawled, they won’t be indexed—and that affects visibility.

Myth 3: More Frequent Crawling Is Always Better

Not necessarily. The goal is effective crawling, not just frequent crawling.

Myth 4: Submitting a URL in Search Console Guarantees Immediate Indexing

It helps but doesn’t guarantee anything. Google still uses its own signals.

How Crawl Budget Impacts SEO Rankings

While crawl budget doesn't directly influence rankings, it plays a foundational role in getting pages indexed. Pages not indexed can’t rank—period.

Other indirect ways crawl budget affects SEO:

- Freshness: Regularly crawled sites keep their content up-to-date in search results.

- Discoverability: Well-linked pages are more likely to be found and indexed.

- Index Efficiency: Maximizing crawl budget means faster indexing of high-value pages.

Best Practices to Optimize Your Crawl Budget

1. Use Robots.txt Strategically

Block low-value pages (e.g., admin pages, filter options) from being crawled.
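Before deploying new rules, it helps to test them against sample URLs. The sketch below uses Python's standard `urllib.robotparser`; the rules and URLs are made-up examples. One caveat: this parser does simple prefix matching and does not implement Google's wildcard (`*`, `$`) extensions, so treat it as a rough check rather than an exact model of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; adjust the paths to your own site structure.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def is_crawlable(path, user_agent="Googlebot"):
    """Check a path on the hypothetical example.com site against the rules."""
    return parser.can_fetch(user_agent, "https://example.com" + path)

for path in ["/admin/login", "/search?q=shoes", "/products/blue-shoes"]:
    print(path, "->", "allowed" if is_crawlable(path) else "blocked")
```

Keep in mind that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it.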

2. Optimize Your XML Sitemap

Include only high-priority, index-worthy pages. Keep it clean and updated.
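As an illustration, a sitemap can be generated from a page inventory while filtering out anything not meant for the index. The `build_sitemap` function and the `indexable` flag are hypothetical; in practice that flag would come from your CMS or crawl data.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified, indexable) tuples.

    Pages with indexable=False are left out of the sitemap.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod, indexable in pages:
        if not indexable:
            continue
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")

pages = [
    ("https://example.com/", "2025-01-10", True),
    ("https://example.com/products/a", "2025-01-08", True),
    ("https://example.com/cart", "2025-01-10", False),  # not index-worthy
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Regenerating the file from live data on a schedule is one simple way to keep it clean and updated.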

3. Fix Broken Links and Redirect Chains

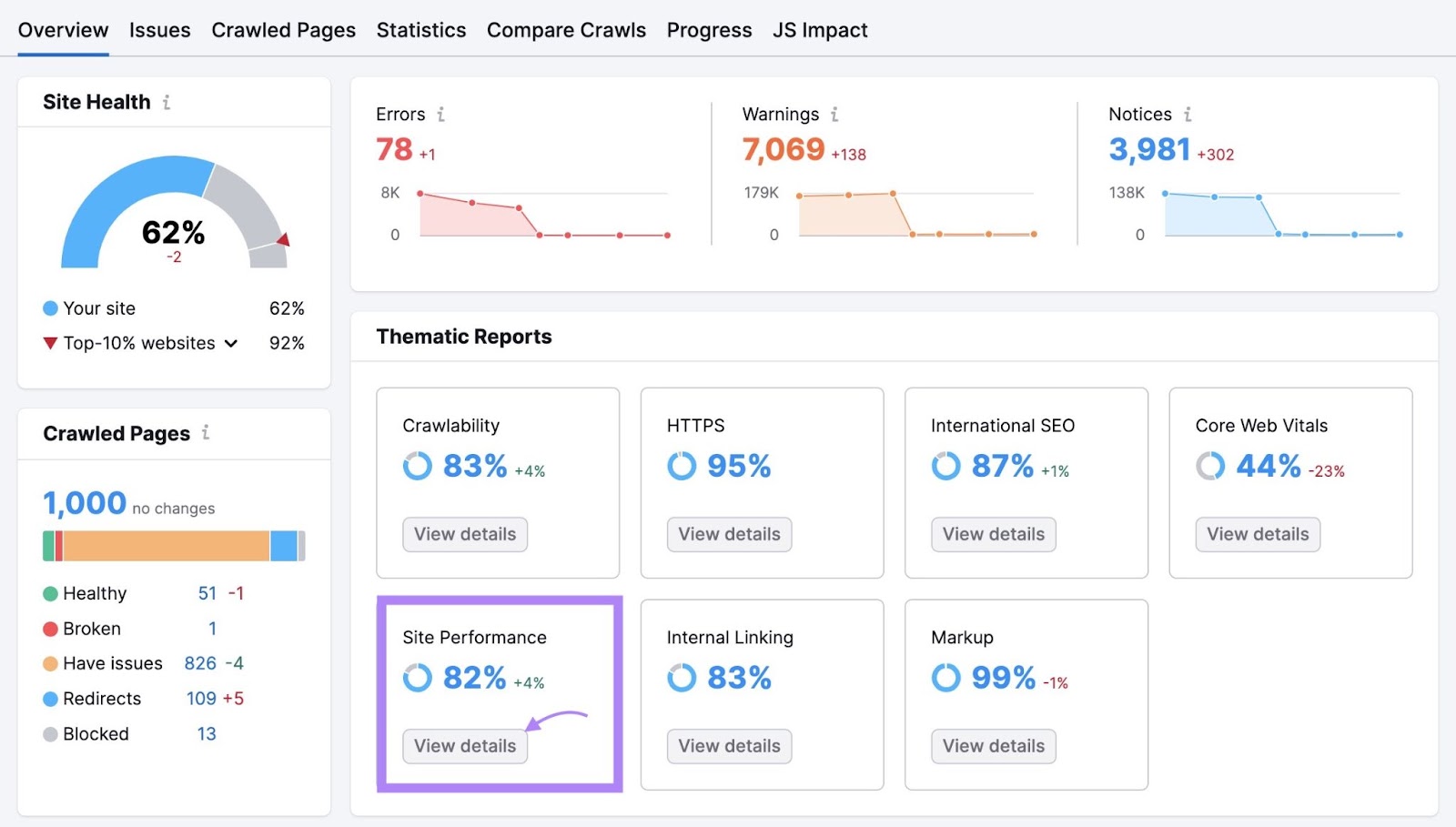

Monitor your site using tools like Screaming Frog, Ahrefs, or SEMrush.

4. Improve Site Speed and Server Response Time

Faster sites can be crawled more thoroughly.

5. Eliminate Duplicate Content

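Use canonical tags to point duplicates at a preferred URL, and consolidate thin content. Noindex directives keep a page out of the index, but they do not save crawl budget on their own, because Googlebot still has to fetch the page to see the directive.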

6. Prioritize Internal Linking

Link to important pages more often. Use descriptive anchor text.
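One rough way to check whether important pages are linked often enough is to count how many distinct pages link to each URL. The sketch below assumes a link graph exported from a crawler; `inlink_counts`, `underlinked`, the `priority_pages` list, and the threshold of two inlinks are arbitrary illustrative choices.

```python
from collections import Counter

def inlink_counts(link_graph):
    """Count distinct pages linking to each URL (self-links ignored)."""
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts

def underlinked(priority_pages, link_graph, min_inlinks=2):
    """Return priority pages that have fewer than min_inlinks inlinks."""
    counts = inlink_counts(link_graph)
    return [page for page in priority_pages if counts[page] < min_inlinks]

link_graph = {
    "/": ["/products", "/blog"],
    "/products": ["/products/a", "/"],
    "/blog": ["/products/a", "/", "/blog"],
    "/products/a": ["/"],
}
priority_pages = ["/products", "/products/a"]
print(underlinked(priority_pages, link_graph))
# prints: ['/products']
```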

7. Consolidate Low-Quality Pages

If a page doesn’t offer value or traffic, either improve it or remove it.

8. Use Pagination Correctly

Follow Google’s guidelines for paginated content to avoid crawl traps.

9. Regularly Audit and Prune Your Site

Over time, old pages accumulate. Removing or redirecting them can streamline crawling.

Crawl Budget for Large vs Small Websites

Small Sites (< 1,000 URLs):

- Likely crawled in full often

- Focus on indexation and page quality

Large Sites (10,000+ URLs):

- Strategic optimization needed

- Must prioritize pages for crawl

- Use log file analysis to understand crawl patterns
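A basic form of log file analysis is counting which URLs and status codes Googlebot requested. The sketch below assumes the common "combined" access log format; the sample lines, IP addresses, and the `googlebot_hits` function are made up for illustration. It matches on the user-agent string only, which can be spoofed, so a real audit should also verify that requests come from Google.

```python
import re
from collections import Counter

# Matches the request, status, and user agent of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

def sample_line(path, status, agent):
    """Build a fake combined-format log line for the demo."""
    return (
        f'192.0.2.10 - - [10/Jan/2025:10:00:01 +0000] '
        f'"GET {path} HTTP/1.1" {status} 5120 "-" "{agent}"'
    )

SAMPLE_LOG = "\n".join([
    sample_line("/products/a", 200, GOOGLEBOT_UA),
    sample_line("/search?q=shoes&page=7", 200, GOOGLEBOT_UA),
    sample_line("/products/a", 200, BROWSER_UA),
    sample_line("/old-page", 301, GOOGLEBOT_UA),
    sample_line("/products/a", 200, GOOGLEBOT_UA),
    sample_line("/missing", 404, GOOGLEBOT_UA),
])

def googlebot_hits(log_text):
    """Return (paths, statuses) Counters for requests claiming to be Googlebot."""
    paths, statuses = Counter(), Counter()
    for line in log_text.splitlines():
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            paths[match.group("path")] += 1
            statuses[match.group("status")] += 1
    return paths, statuses

paths, statuses = googlebot_hits(SAMPLE_LOG)
print("most crawled:", paths.most_common(3))
print("status codes:", dict(statuses))
```

Requests spent on internal search results, redirects, and 404s, as in this sample, are the kind of pattern that log analysis is meant to surface.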

Tip: Use Google Search Console's Crawl Stats report to monitor crawl behavior.

Crawl Budget Tools and How to Use Them

A. Google Search Console

- Crawl Stats

- Page Indexing Report (formerly Index Coverage)

- URL Inspection Tool

B. Screaming Frog

- Visualize crawl paths

- Identify errors and duplicate content

C. Log File Analyzers (e.g., JetOctopus, Logz.io)

- Understand exactly what Googlebot crawled

D. SEMrush & Ahrefs

- Site Audit reports

- Crawl efficiency metrics

FAQs on Crawl Budget

Q: Does crawl budget apply to every search engine?

A: Not in the same way. Every crawler has limited resources, but each search engine has its own crawling behavior. Google is the most transparent about how it handles crawl budget.

Q: Can I request Google to crawl faster?

A: You can request crawling via Search Console, but Google decides based on server and site signals.

Q: What’s a good crawl frequency?

A: There’s no universal number. As long as important pages are crawled and indexed regularly, you're in good shape.

Q: Should I worry about crawl budget for my blog?

A: Unless you have thousands of posts or categories, probably not.

Final Thoughts

Crawl budget is a powerful concept, especially for large or complex websites. While it doesn't directly impact rankings, it influences whether or not your pages can be found and indexed.

By following best practices—like improving site structure, optimizing internal links, and fixing crawl errors—you ensure Googlebot is using its time on your site wisely. That alone can be the difference between an invisible page and a top-ranking one.

Remember: Good SEO isn't just about keywords and backlinks—sometimes it's about letting Google do its job more efficiently.

Need help optimizing your website’s crawl budget or doing a technical SEO audit? Contact Open Sales LLC today for a consultation tailored to your business.
